What Is a Virtual NAS and What Features & Components Does It Offer

In the mid-1980s, organizations increased employee productivity by providing computing resources on desktop systems, but the workload of IT managers doubled.

One of the problems that IT professionals struggled with was handling and managing the storage space used by users.

In the 1990s, the issues surrounding networks, end users, and data management, such as achieving the best performance, scaling, reducing downtime, and ensuring recoverability, took on a new shape.

This is when network-attached storage (NAS) technology emerged, although it significantly differed from what we call NAS today.

To understand virtual NAS, we must first examine what capabilities traditional NAS mechanisms offer and what limitations they face. Those limitations are what eventually brought virtualization technology into the world of network storage.

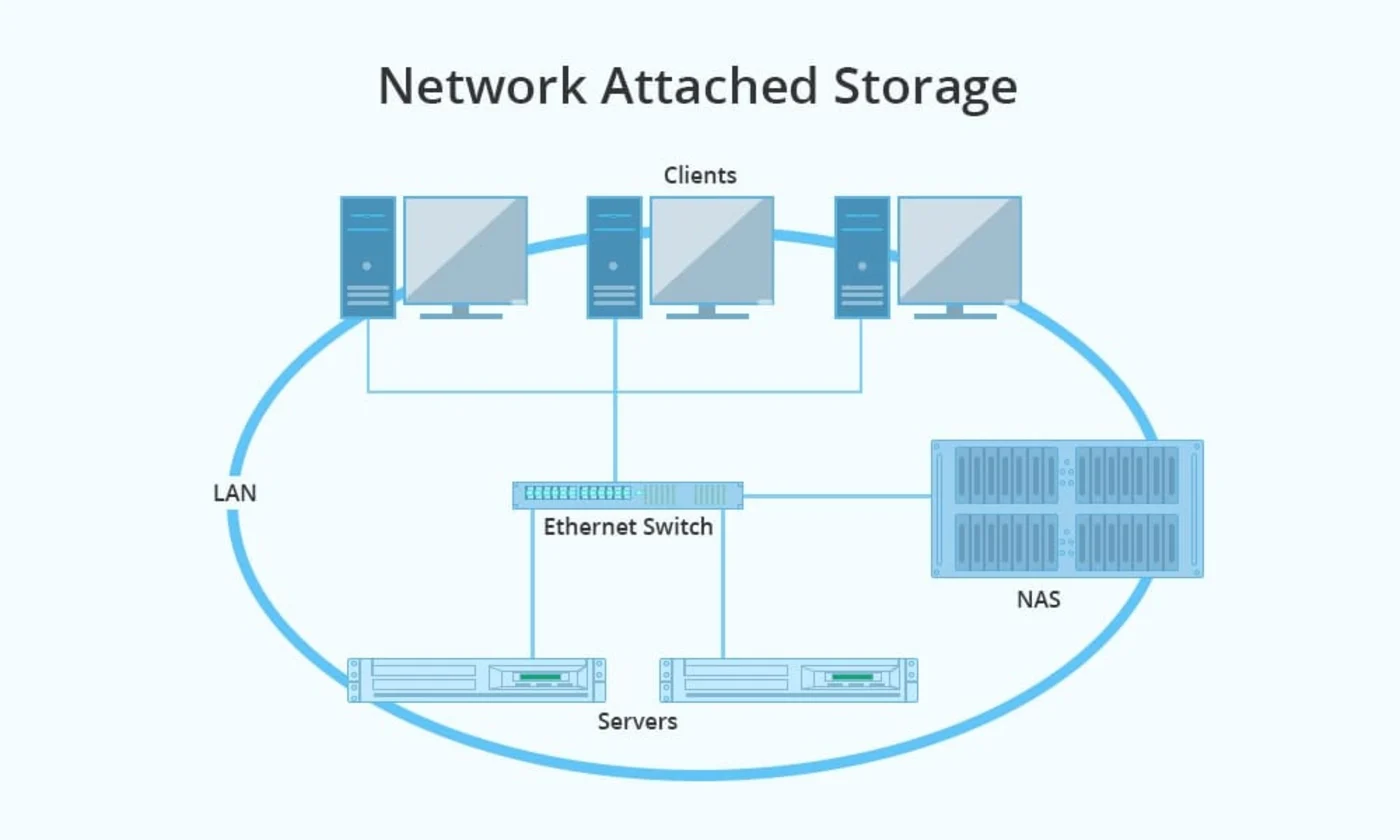

What is virtual network-attached storage?

Virtual network-attached storage (virtual NAS) is a virtual machine (VM) that is available to users as a file server. In this case, the NAS is presented to clients as a dedicated device with a unique address that hosts data storage services. Virtual NAS has several significant advantages over traditional block storage in network environments.

Virtual NAS is managed through an interface similar to that of other virtual servers in the network, so it can be moved from one physical host to another. This feature simplifies maintenance, minimizes downtime, and helps network experts recover and restore service after cyberattacks or other failures.

In case of a sudden and dramatic increase in demand for network resources, the virtual NAS can be quickly transferred to a more robust and faster host to provide the required services to all users without interruption. Also, virtual NAS delivers a simple mechanism for scalability as the amount of stored data increases.

The first generation of NAS servers

In the first generation of NAS, the server was simply the physical location where files were stored. Early-generation NAS servers were mostly generic servers configured for one specific job: serving files. They did that one task well. Using techniques that simplified configuration and installation, NAS servers allowed IT administrators to overcome management, configuration, and availability difficulties and to focus on managing files and file structures rather than storage media.

Adding a NAS server to the network was simple; it was not complicated to manage and maintain. It was mainly used for classifying and sharing files and consolidating storage space. It was installed in just a few minutes and required minimal preparation. Also, it supported the data-sharing feature in a heterogeneous environment.

However, as business activities expanded and enterprise network architectures became more complex, NAS’s limitations became apparent. Early-generation NAS servers did not provide good scalability and could not meet the needs of large organizations.

One of the biggest obstacles to the scalability of early NAS was the inability of the NAS server's file system to grow beyond the server that hosted it.

This limitation significantly affected availability, as well as how tasks were carried out and how long they took. When an organization needed more storage space or better performance, it had to buy and add a new server to the network.

This process involved adding a server or replacing the old server with a new one, moving applications to the new server, copying existing data to it, changing the sharing mechanism, pointing desktop systems at the new server so they could locate the data, and reconfiguring the new resources.

This seriously disrupted an organization’s business activities for at least a few days. Since scalability was such a nightmare, large organizations used NAS servers with capacity and processing power beyond their business needs to avoid implementing the network from scratch if they needed more capacity or performance.

As you might have guessed, this process was inefficient and costly. Overall, although the first generation of NAS offered useful capabilities, it failed to keep up with technological advancements. As a result, these servers quickly lost the charm and ease of use that had once made them so successful.

The rise of the SAN

While NAS servers were used at the departmental and workgroup scale, another storage architecture emerged at the enterprise level: the Storage Area Network (SAN). The SAN decoupled servers from storage, so all storage devices were deployed on a block-oriented fabric to which all servers had access.

Unlike NAS, which provides file-based management, SANs manage storage resources at the block and device level. No file systems are associated with the SAN, which allows SANs to support capacity expansion more easily.

Connecting new servers to the storage network also became simpler. This approach solved the scalability, availability, and performance problems of the first generation of NAS.

However, SAN management challenges became apparent as SAN capacity and complexity increased. Since the SAN is implemented at the block and device level and knows nothing about files or file systems, network administrators had to configure it at the lowest level: logical unit numbers (LUNs), switch ports, disk drives, SCSI storage, Fibre Channel, HBA cards, and so on. All data was therefore managed at the block level, not the file level.

The problem with the SAN Fabric was that it quickly got out of control as it grew.

Storage virtualization was invented to simplify SAN management, and it quickly attracted the attention of organizations.

This technology allows network administrators to present storage equipment as a shared storage pool. SAN virtualization software creates a virtual storage layer between the server operating system and the physical storage. This layer creates a virtual collection that is presented to the server operating system as a single large, expandable volume or disk.

At this low-level virtualization layer, the physical capacity of the storage space can be increased dynamically without changing the architecture or the applications.
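The idea of a virtual layer that hides physical devices behind one expandable volume can be illustrated with a small sketch. This is only a toy model under simplifying assumptions (devices are concatenated end to end, with no striping or redundancy); the class names `PhysicalDevice` and `VirtualVolume` and the device names are hypothetical and do not correspond to any particular SAN product.

```python
from dataclasses import dataclass, field


@dataclass
class PhysicalDevice:
    """A hypothetical physical disk or LUN, sized in blocks."""
    name: str
    blocks: int


@dataclass
class VirtualVolume:
    """Presents several physical devices as one contiguous block range."""
    devices: list = field(default_factory=list)

    @property
    def capacity(self) -> int:
        return sum(d.blocks for d in self.devices)

    def add_device(self, device: PhysicalDevice) -> None:
        # Growing the pool only appends to the end of the address space,
        # so existing virtual block numbers keep their mapping.
        self.devices.append(device)

    def locate(self, virtual_block: int) -> tuple:
        """Translate a virtual block number to (device name, physical block)."""
        if virtual_block < 0:
            raise IndexError("block number must be non-negative")
        offset = virtual_block
        for device in self.devices:
            if offset < device.blocks:
                return device.name, offset
            offset -= device.blocks
        raise IndexError("block beyond volume capacity")


volume = VirtualVolume()
volume.add_device(PhysicalDevice("disk-a", 1000))
volume.add_device(PhysicalDevice("disk-b", 500))
before = volume.locate(1200)   # resolved against the two-device pool
volume.add_device(PhysicalDevice("disk-c", 2000))
after = volume.locate(1200)    # same mapping after the pool has grown
```

In this sketch the server only ever sees virtual block numbers, so adding `disk-c` raises the capacity without changing where existing blocks resolve.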

Virtualization worked so well with SANs that network administrators could quickly learn how to manage physical and virtual volumes. SANs once again attracted the attention of companies as an efficient storage mechanism with simple management. This ease of use led to virtualization being adopted in other areas, such as servers and networks.

Some of these virtualization approaches performed better than others and made network administrators' tasks simpler, but they did not remove the complexity of managing storage space. For this reason, storage professionals were still looking for a way to overcome that complexity.

Present

Today’s NAS systems have more capacity and performance than their predecessors. Thanks to this increased performance, today’s NAS solutions are used in fields where they would not have been expected in the past.

Vendors take different approaches to solving NAS scalability and growth issues. For example, some have developed larger and faster storage; some have focused on developing specialized equipment in this field, such as sensor switches; others have developed virtualization solutions; and others have set up software-driven NAS servers that are host-based, fabric-based, or stand-alone.

The problem with some of these solutions is that most new NAS servers either use the same architecture as the NAS of the 1990s or add extra software or hardware layers to the storage architecture, which adds complexity and overhead.

Some of the NAS solutions on offer are described below.

Monolithic Server-Based

Monolithic designs offer traditional NAS benefits, such as simple deployment and centralized file storage. This NAS design is centered on a thin server: a customized operating system that manages fast input/output operations and supports RAID for data protection. Network clients access the NAS server through a 10/100/1000 Mbps Ethernet connection.

Newer architectures take advantage of increased capacity and improved performance more efficiently. Still, these designs cannot overcome the limitations of a NAS server that requires a single file system, and they cannot provide centralized management of systems distributed across different geographic regions.

Software-Based

Software-based solutions offer software packages that turn standard Windows and Unix servers into NAS devices. This software provides functions and capabilities for NAS management.

Because this software runs on general-purpose hardware, either directly on bare metal or on top of an operating system, it performs poorly compared to purpose-built commercial NAS servers.

Similar to monolithic NAS architectures, software-based solutions face the same scalability, performance, and management issues that traditional NAS designs have struggled with. However, they perform well in some scenarios.

Storage Aggregation (NAS Aggregation)

Storage aggregation builds on traditional NAS servers by aggregating, or virtualizing, the storage that clients see. This solution has many problems. It doubles the complexity of design and implementation, reduces performance (because one of the aggregated devices may be slow), increases latency in data processing, and may compromise data integrity.

Storage aggregation mechanisms solve some management and scaling issues, but potentially create more problems. Although the above architecture is an evolved NAS, it still faces vexing scaling and management issues.

The next generation of network-attached storage

Such issues have led the storage and networking world to look for a comprehensive, integrated storage solution: one that can respond to growing capacities and provides efficient management features that keep pace with changes in information technology.

Such a solution should integrate with virtualization technology, provide the simplest form of management, eliminate inefficiencies, and minimize additional costs.

Various companies, such as QNAP, Synology, HP, Spinnaker Networks, etc., have developed next-generation NAS to meet market demand. These systems offer scalability and performance to meet enterprise and service provider needs.

These companies introduced proprietary operating systems with extensive built-in virtualization features, aiming to offer a complete solution that overcomes scaling, performance, and management issues.

For example, Spinnaker’s SpinFS operating system implements a two-tier distributed architecture that separates network operations from disk operations. SpinFS supports the clustering of SpinServers and allows for increased capacity without disrupting network performance.

In general, the operating systems that provide virtualization capabilities for NAS share a set of common features, the most important of which are the following:

- Providing a distributed global file system on multiple servers.

- Implementing application-specific storage repositories.

- Online transfer of data without interruption.

- Grouping clients to share resources among them securely.

- Grouping clients to manage them logically.

- Providing detailed reports on port problems.

- Load distribution among servers without disrupting clients’ work activities.

Virtual features

One of the essential concepts to understand when using virtualization technology with NAS is the virtual file system (VFS), which the storage system uses as a global storage container. A VFS may contain multiple files that can be viewed and managed as a single storage container.

A VFS may be assigned to a user, a group of users, or applications. Because it carries its own quotas and permissions, it fits the concept of virtualization well. To access files, clients use a network connection to interact with the system as they would with a standard NAS server.
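To make the role of quotas and permissions concrete, here is a minimal sketch of a VFS as a storage container that enforces both on every write. It is an illustrative model only, not the data structure of any real NAS operating system; the `VirtualFileSystem` class, the `QuotaExceeded` exception, and the user and path names are all assumptions made for the example.

```python
from dataclasses import dataclass, field


class QuotaExceeded(Exception):
    """Raised when a write would push a VFS past its quota."""


@dataclass
class VirtualFileSystem:
    name: str
    quota_bytes: int
    writers: set = field(default_factory=set)   # users allowed to write
    files: dict = field(default_factory=dict)   # path -> size in bytes

    @property
    def used_bytes(self) -> int:
        return sum(self.files.values())

    def write(self, user: str, path: str, size: int) -> None:
        if user not in self.writers:
            raise PermissionError(f"{user} may not write to {self.name}")
        # Overwriting a file frees its old size before the new size is charged.
        projected = self.used_bytes - self.files.get(path, 0) + size
        if projected > self.quota_bytes:
            raise QuotaExceeded(f"{self.name} would exceed {self.quota_bytes} bytes")
        self.files[path] = size


vfs = VirtualFileSystem("engineering", quota_bytes=100, writers={"alice"})
vfs.write("alice", "/specs/a.txt", 60)
```

Because the quota and the writer list travel with the container rather than with a physical disk, the same rules would apply wherever the VFS happens to be hosted.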

In this case, the client files are stored in a virtual file system hosted on the server. The operating system transfers information to the storage and connects clients with the virtual file system.

The virtual file system plays a vital role in eliminating location dependence. As a result, users are less likely to be affected by problems such as communication channel failure or data loss. It also makes reconfiguration and scaling much easier, because clients address the virtual file system rather than a specific piece of physical storage media.
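Location independence can be sketched as a lookup table that sits between the path a client uses and the server that currently hosts the data. The following is a simplified illustration under the assumption that the first path component names the VFS; the `GlobalNamespace` class and the server names are hypothetical.

```python
class GlobalNamespace:
    """Maps VFS names to the server currently hosting them."""

    def __init__(self):
        self._location = {}

    def place(self, vfs_name: str, server: str) -> None:
        self._location[vfs_name] = server

    def migrate(self, vfs_name: str, new_server: str) -> None:
        if vfs_name not in self._location:
            raise KeyError(f"unknown VFS: {vfs_name}")
        # Only the mapping changes; client-visible paths stay the same.
        self._location[vfs_name] = new_server

    def resolve(self, client_path: str) -> tuple:
        """Return (hosting server, path inside the VFS) for a client path."""
        parts = client_path.strip("/").split("/", 1)
        vfs_name = parts[0]
        inner = parts[1] if len(parts) > 1 else ""
        return self._location[vfs_name], "/" + inner


ns = GlobalNamespace()
ns.place("engineering", "server-1")
first = ns.resolve("/engineering/specs/a.txt")
ns.migrate("engineering", "server-2")
second = ns.resolve("/engineering/specs/a.txt")
```

The client asks for the same path before and after the migration; only the server returned by `resolve` differs, which is what lets data move without clients being reconfigured.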

Virtual server

A virtual server (VS) logically groups different storage resources into a single unit that users within an organization can access. A virtual server is typically configured with client access ports, a virtual file system, and network administration tools.

Virtual servers can span several physical servers located in different geographic regions. In this case, the virtual server provides hybrid storage resources to the clients.

Virtual servers increase security and simplify management by logically dividing and isolating users into shared and separate groups. Each virtual server provides workgroups with a set of network ports and virtual file systems.

In this mode, only requests sent through ports configured on the virtual server are accepted and forwarded to VFSs stored on the virtual server. Note that users only see VFSs associated with the virtual server.
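The isolation rule described above, where requests are accepted only on a virtual server's own ports and can reach only its own VFSs, can be sketched as follows. This is a toy model; the `VirtualServer` class, the port labels, and the VFS names are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualServer:
    name: str
    ports: set = field(default_factory=set)       # ports configured on this VS
    vfs_names: set = field(default_factory=set)   # VFSs associated with this VS

    def visible_vfs(self) -> list:
        """Users only ever see the VFSs tied to this virtual server."""
        return sorted(self.vfs_names)

    def route(self, port: str, vfs_name: str) -> str:
        # Requests arriving on a port that is not configured here are rejected.
        if port not in self.ports:
            raise PermissionError(f"port {port} is not configured on {self.name}")
        # Requests for a VFS outside this virtual server cannot be forwarded.
        if vfs_name not in self.vfs_names:
            raise LookupError(f"{vfs_name} is not associated with {self.name}")
        return f"{self.name}:{vfs_name}"


vs = VirtualServer("finance-vs", ports={"vif-1"}, vfs_names={"ledgers", "payroll"})
target = vs.route("vif-1", "ledgers")
```

A second virtual server with its own ports and VFS set would be unreachable from `vif-1`, which is how workgroups stay separated even when they share physical hardware.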

Virtual Interface

The virtual interface gives clients access to the virtual server and is mapped to a physical interface on the server. The virtual interface is then assigned to a specific user or user group. End users are not aware that virtual servers or virtual interfaces exist. A virtual server typically has one or more dedicated virtual interfaces.

A virtual NAS solution is suitable for companies and workgroups. One option for deploying a virtual NAS is QuTScloud, a virtual appliance based on QNAP’s QTS operating system. Companies that need to expand resources, want flexibility, and already use QNAP products can use QuTScloud to deploy and manage business data in public clouds and virtualization environments.

The main advantage of this software is that it enables faster data transfer between clouds and makes the management of cloud storage centralized and straightforward. The software supports the SMB, FTP, AFP, NFS, and WebDAV protocols for connecting to cloud storage gateways, accessing cloud and virtual storage space, and sharing data.

QuTScloud supports standard protocols such as CIFS/SMB, NFS, AFP, and iSCSI to access the QuTScloud cloud NAS. In addition, File Station provides a web-based dashboard for managing and sharing files.

The benefits of implementing virtual NAS with QuTScloud include the following:

- Optimal use of resources: Network administrators can run QuTScloud virtual machines on private enterprise servers to optimize system resources.

- Agility and cost savings: QuTScloud simplifies access to QTS services and NAS utility features.

- Cloud Storage Gateways: QuTScloud provides capabilities for organizations looking for private and hybrid cloud solutions.

FAQ

What exactly is a Virtual NAS?

It is a virtual machine or appliance that provides NAS file services (such as NFS and SMB/CIFS) on virtualized or cloud infrastructure, enabling NAS functionality to be moved off dedicated hardware.

Which major features distinguish a Virtual NAS from traditional NAS hardware?

Key features include high availability (HA) clustering, snapshots, deduplication and compression, thin provisioning, multi-protocol access (NFS, SMB, iSCSI), Active Directory/LDAP integration, and cloud-tier or object-storage backends.

What are the fundamental components required to build or run a Virtual NAS?

Components typically include:

- A virtualized compute layer (VM or container) hosting the NAS software (CPU, memory).

- Storage media (HDDs, SSDs, or hybrid) allocated or attached to the VM.

- Network interface(s) (Ethernet, potentially 10GbE+) for file-service throughput.

- NAS software/OS providing the file system, RAID/pooling, user and permissions management, and service protocols.