A New Innovation From Google’s Advanced Technology And Projects Group, Or ATAP, Can Detect People’s Movements And Body Language Using Radar Waves Instead Of A Camera.

Google’s research team is working on technologies that use radar to let computers respond to the people around them and to their body language. Imagine your laptop deciding not to sound a notification because it knows you are not sitting at your desk at that moment, or your TV automatically pausing a Netflix movie when you get up to answer the door and resuming it when you return to the couch.

Imagine a future in which computers understand human social behavior and become more considerate companions for us.

The notion of a computer monitoring a person’s every movement may seem unpleasant, like something out of a science fiction script. Still, once you know that these computers do not use cameras to detect where people are moving, that initial unease may ease a little.

Google has decided to use radar instead of a camera to track users’ movements.

Google’s Advanced Technology and Projects division, ATAP for short, which has previously worked on unusual projects such as a touch-sensitive denim jacket, has turned its attention to something else over the past few years.

ATAP engineers hope to use radar to develop a system in which computers respond appropriately by detecting behavior and inferring what users need. This is not the first time Google has used radar to make its products more aware of their surroundings.

In 2015, Google unveiled Soli, a sensor that uses radar’s electromagnetic waves to accurately detect the postures and movements of the human body. The sensor first appeared in the Google Pixel 4, where it detected simple hand gestures. Pixel 4 users could issue simple commands, such as pausing music or snoozing alarms, with hand gestures and without physically touching the phone.

More recently, Google has used the radar sensor in its second-generation Nest Hub smart display to detect the movements and breathing patterns of a person sleeping next to the screen. Unlike gadgets such as smartwatches, this device can track sleep without the need for physical contact.

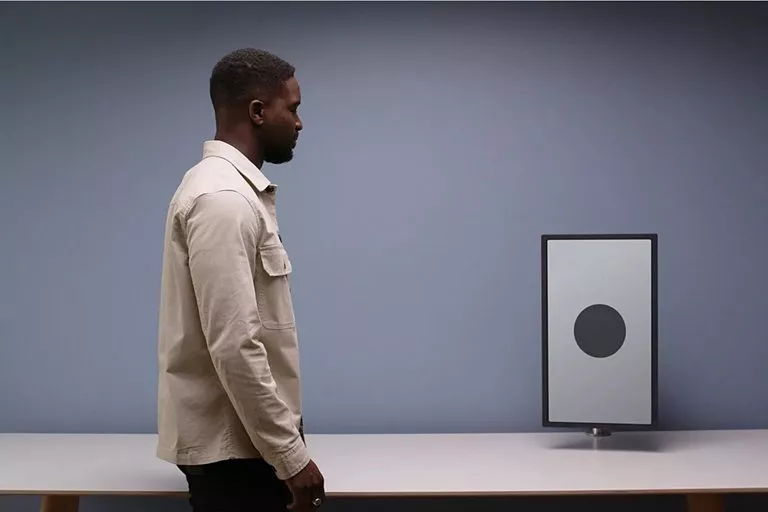

This GIF shows a person approaching the space around the computer and the radar at work.

As the person approaches the computer, the distance readings and other variables change on the screen.

The ATAP team is also using the Soli sensor in its new project. However, instead of using sensor input to control a computer directly, ATAP engineers plan to use the sensor data to recognize people’s everyday movements and help computers make decisions.

Leonardo Giusti, head of design at ATAP, says:

We believe that as technology becomes more present in our daily lives, it is fair to ask technology itself to take a few more cues from us.

Suppose you are about to leave home and your mother reminds you to take an umbrella because of the weather. With this technology, your home thermostat could display the same reminder as you walk past it, or your TV could lower its volume automatically when it notices that you have fallen asleep on the couch.

Radar research

According to Giusti, much of the team’s research draws on proxemics, the study of how humans use the space around them as a medium for social interaction.

When you move closer to someone, you expect more engagement and intimacy. ATAP’s research team has used this behavior and other social cues to establish how humans and devices should interact and to define the personal space between them.

Radar can detect when a person is approaching a device and entering its personal space. That capability lets the computer perform specific tasks at the right moment. For example, when the user approaches, the computer can wake the screen from sleep without the user having to press any physical buttons.

Google already uses this kind of interaction in its smart displays, although those devices rely on ultrasonic sound waves rather than radar to measure a person’s distance from the screen.

When a Nest Hub notices the user approaching, it highlights important notifications on its screen, such as calendar events and reminders.

However, knowing the distance from the device is not enough on its own, because the person may simply be walking past it, or may be looking the other way with no intention of interacting with the computer at all.

The Soli sensor can capture finer subtleties in movements and gestures, such as the orientation of the body, the path a person is likely to take, and the direction the person’s face is pointing.

The sensor’s data is further refined with machine learning algorithms to detect these subtleties. The resulting information helps the Soli sensor predict more accurately what users mean: whether the user intends to start interacting with the computer and, if so, what the best form of interaction would probably be.
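The idea that distance alone is not enough can be illustrated with a minimal sketch. The function below is a hand-written rule, not the machine-learning approach described above; the function name, its parameters, and the 30-degree facing threshold are assumptions made purely for illustration, while the 3-meter limit follows the detection range quoted later in this article.

```python
def likely_to_interact(distance_m: float,
                       facing_offset_deg: float,
                       closing_speed_mps: float,
                       max_range_m: float = 3.0,
                       max_facing_offset_deg: float = 30.0) -> bool:
    """Guess whether a person intends to interact with the device.

    Hypothetical rule: the person must be within range, roughly facing
    the device, and moving toward it. All thresholds are illustrative.
    """
    in_range = distance_m <= max_range_m
    facing = abs(facing_offset_deg) <= max_facing_offset_deg
    approaching = closing_speed_mps > 0.0  # positive means getting closer
    return in_range and facing and approaching


if __name__ == "__main__":
    # Someone nearby, facing the device, and walking toward it.
    print(likely_to_interact(1.2, 10.0, 0.4))
    # Someone nearby and moving closer, but looking the other way.
    print(likely_to_interact(1.2, 120.0, 0.4))
```

A real system would presumably replace these fixed thresholds with a learned model, but the sketch shows why orientation and direction of travel are needed alongside distance.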

To improve the performance of the sensors, ATAP team members performed a range of movements and gestures. They recorded these movements with cameras in their living rooms (they all had to stay home at the time) while the radar sensors simultaneously analyzed their motion. Lauren Bedal, a senior interaction designer at ATAP, explains:

We moved in different directions and performed a series of movements. Since the systems were recording and evaluating activity in real time, we were able to compare the data from the camera and the sensors and take the next steps to improve the accuracy of the radar sensor design.

Bedal also has a background in dance. She says the process closely resembles how choreographers take a simple movement idea and develop it into a set of variations by changing the position and orientation of the body.

Based on these studies, the ATAP research team defined a set of specific movements inspired by people’s nonverbal interactions with physical devices, such as approaching or moving away from a device, passing by it, turning the head toward or away from it, and glancing at it.

Bedal gives some examples of computers responding to people through motion detection. If a device senses that the user is approaching, it can bring up touch controls; if the person moves toward the device, its display can turn on and show important notifications; and if the user leaves the room, the TV can pause the program at that moment and resume from the same point when the person returns.

And if a device detects that a person is merely passing by, it will not disturb them with unimportant notifications.

If the user is watching a cooking video in the kitchen and walks to the cabinet to fetch spices, playback pauses automatically until the user returns and shows an intention to keep watching.

When the user is talking on a mobile phone and glances at the smart display for a moment, the display can offer to transfer the call to video so that the user can continue the conversation on its screen. Bedal explains:
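The reactions in these examples amount to a mapping from a detected movement to a device action. A minimal sketch of such a mapping follows; the cue names and action labels are hypothetical, chosen only to mirror the examples, and are not taken from any Google API.

```python
from enum import Enum, auto


class Cue(Enum):
    """Hypothetical movement cues, named after the article's examples."""
    APPROACH = auto()
    PASS_BY = auto()
    LEAVE_ROOM = auto()
    RETURN_TO_ROOM = auto()


# Illustrative action labels; a real device would call its own functions.
ACTIONS = {
    Cue.APPROACH: "wake_display_and_show_notifications",
    Cue.PASS_BY: "stay_quiet",
    Cue.LEAVE_ROOM: "pause_playback",
    Cue.RETURN_TO_ROOM: "resume_playback",
}


def choose_action(cue: Cue) -> str:
    """Return the device reaction associated with a detected cue."""
    return ACTIONS[cue]


if __name__ == "__main__":
    for cue in Cue:
        print(cue.name, "->", choose_action(cue))
```

Keeping the mapping in a plain table makes it easy to see, and to override, what the device will do for each cue.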

These movements point to a future in which computers work invisibly by drawing on the natural ways we move. Our main idea is that computers should recede into the background and help us only in the right situations. We are pushing the boundaries and exploring all the possible ways humans can interact with computers.

Bringing computers closer to humans

Using radar to shape how computers react to human movements has its problems. For example, radar can detect multiple people in a room, but if they are very close to each other, it sees them as a single amorphous blob, which can interfere with a device’s ability to make decisions.

Given the problems facing this new generation of radar sensors, Bedal has repeatedly stressed that the technology is still in the research phase and will not be used in the next generation of Google smart displays.

One advantage of radar sensors is that radar-equipped devices can learn people’s movement patterns over time. According to Leonardo Giusti, this ability is one of the key goals on ATAP’s roadmap and could help users develop new, healthier habits.

Imagine heading to the snack cabinet in the middle of the night, only for the kitchen smart display to light up suddenly and show a big stop sign.

Smart devices and computers must strike a balance when they predict user behavior and act on those predictions. For example, someone might want the TV to stay on while cooking in the kitchen, without actively watching it. That is probably true for most of us.

In this and similar situations, the radar would not detect anyone in the living room, and the TV would pause instead of continuing to play.

Bedal describes such situations as follows:

As we study interaction patterns that feel invisible, seamless, and fluid, we need to strike the right balance between automation and user control.

In such situations, the interaction should feel effortless and fluid, so that the user is not annoyed or worn out by the device stepping in at the wrong time.

Therefore, where users expect more control over the device, we must ensure that they have access to several levels of control and manual settings.

One of the reasons the ATAP team chose radar is its privacy-friendly nature. Although radar collects rich data about people’s position and movements, it is among the more privacy-preserving ways of doing so. Radar also has very low latency, works in the dark, and is unaffected by external factors such as sound and temperature.

Unlike cameras, radar cannot capture or store clear images of the body, the face, or anything else that could identify a person.

“Radar is more like an advanced motion sensor,” explains Giusti.

The detection range of the Soli sensor is about 3 meters, which is shorter than that of most cameras. However, several Soli-equipped devices can work together as a coordinated network that covers the whole house and detects people’s movements and positions.

It is also important to note that the Soli sensor used in Google’s smart display processes data locally, and raw data is never sent to the cloud.

Chris Harrison, a researcher in human-computer interaction at Carnegie Mellon University in Pittsburgh and director of the Future Interfaces Group, believes that sooner or later users will have to decide whether buying Google products is worth the privacy trade-off. After all, according to Harrison, no one in the world can match Google at monetizing its customers’ data.

Still, Harrison believes that leaving cameras out of Google’s products reflects an approach that protects privacy and puts users’ interests first. He adds:

There is no such thing as simply privacy-invading or privacy-preserving.

Everything sits on a spectrum, with privacy invasion at one end and privacy protection at the other, and every digital product falls somewhere between the two.

Harrison expects that the kind of human-computer interaction ATAP’s researchers are pursuing will eventually appear in every area of technology. As everyday devices inevitably gain more sensors, their ability to understand human behavior will grow as well. Harrison adds:

Humans are hardwired to understand human behavior, and when computers get this nonverbal interaction wrong, the result can be especially frustrating for people. Involving social and behavioral scientists in computing research can make such experiences more pleasant and more humane.